Changelog

Follow up on the latest improvements and updates.


🚨

Critical Backdoor Discovered in Upstream xz/liblzma Repository and Release Tarballs (versions 5.6.0 and 5.6.1)

🚨 The backdoor injects malicious code into the liblzma library during the build process, targeting x86-64 Linux systems.

🔒

Impact:

The backdoor can affect applications that use liblzma, including OpenSSH, which may experience slower logins or other issues. The backdoor can modify the RSA\_public\_decrypt function in OpenSSH, potentially allowing unauthorized access or remote code execution.

🕵️

Discovery:

The backdoor was discovered by a security researcher who noticed odd symptoms around liblzma on Debian sid installations. The researcher observed that logins via ssh became a lot slower and valgrind errors were present. After further investigation, the researcher discovered that the upstream xz repository and the xz tarballs had been backdoored.

💻

Technical Analysis:

The malicious build-time injection code ships in the distributed release tarballs. It is not in the upstream source of build-to-host, nor is build-to-host used by xz in git. The exception among the upstream release downloads is the "source code" links, which are generated directly from the repository contents.

The backdoor is designed to work on glibc-based systems and targets only x86-64 Linux. It checks for specific conditions, such as the build environment and the presence of certain files. It can modify the RSA\_public\_decrypt function in OpenSSH, potentially allowing unauthorized access or remote code execution.
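As a rough illustration of the targeting described above, the following sketch checks whether a host matches the stated profile (Linux, x86-64, glibc). The function names are ours and purely illustrative. A match only means the host falls into the targeted class, not that it is compromised, and this is not a reconstruction of the checks the backdoor itself performs.

```python
import platform


def matches_targeted_profile(system: str, machine: str, libc: str) -> bool:
    """Return True if the given host description fits the targeted profile.

    The profile (Linux, x86-64, glibc) comes from the analysis above;
    the function itself is a hypothetical helper for triage.
    """
    is_linux = system == "Linux"
    is_x86_64 = machine in ("x86_64", "amd64")
    is_glibc = libc == "glibc"
    return is_linux and is_x86_64 and is_glibc


def current_host_matches() -> bool:
    """Apply the profile check to the machine running this script."""
    # libc_ver() reports ("glibc", "<version>") when the Python binary is
    # linked against glibc, and empty strings when it cannot tell.
    libc_name, _libc_version = platform.libc_ver()
    return matches_targeted_profile(
        platform.system(), platform.machine(), libc_name
    )


if __name__ == "__main__":
    print("host matches targeted profile:", current_host_matches())
```

A host that does not match the profile can be deprioritized, but upgrading xz is still the safer course.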

🛠️

Mitigation:

A script is available to detect if the ssh binary on a system is vulnerable. Users are advised to upgrade their systems as soon as possible. Red Hat has assigned CVE-2024-3094 to this issue.
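Separately from the detection script mentioned above, a quick first check is whether the installed xz reports one of the two affected versions. The sketch below parses text such as the output of `xz --version`. The helper names are hypothetical, and it does not inspect the ssh binary, so treat it as a coarse filter only. Distribution package versions may be formatted differently from what `xz --version` prints.

```python
import re
from typing import Optional

# Affected upstream releases, as stated in the advisory above.
AFFECTED_VERSIONS = {"5.6.0", "5.6.1"}


def extract_xz_version(version_output: str) -> Optional[str]:
    """Return the first x.y.z version found in the text, or None."""
    match = re.search(r"\b(\d+\.\d+\.\d+)", version_output)
    return match.group(1) if match else None


def is_affected(version_output: str) -> bool:
    """Return True if the reported version is one of the affected releases."""
    return extract_xz_version(version_output) in AFFECTED_VERSIONS


if __name__ == "__main__":
    import subprocess

    try:
        # Capture what the locally installed xz reports about itself.
        result = subprocess.run(
            ["xz", "--version"], capture_output=True, text=True, check=False
        )
        print("affected version reported:", is_affected(result.stdout))
    except FileNotFoundError:
        print("xz not found on PATH")
```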

🔒

Conclusion:

The discovery of this backdoor in the upstream xz/liblzma repository and release tarballs is a significant concern for the security community. The backdoor has the potential to affect a wide range of applications that use liblzma, including OpenSSH. It is crucial that users upgrade their systems as soon as possible to mitigate the risk of unauthorized access or remote code execution.

#infosec #cybersecurity #vulnerability #backdoor #xz #liblzma #openssh

Earlier this month, NIST published a detailed document titled SP 800-204D, offering concrete steps for secure software development. Recommendations from the U.S. federal government about securing software supply chains can be generic, but experts say the new guidance from the U.S. National Institute of Standards and Technology (NIST) offers concrete steps.

The latest guidance is NIST’s final guideline for software providers on implementing the building blocks of supply chain security assurances into CI/CD pipelines. It recommends that organizations prioritize a series of actionable measures, including establishing baseline security requirements for integrating open-source software and expanding oversight of provenance data.

See our blog post for a detailed analysis: https://lstn.dev/nist

We're excited to introduce our newest team member Rafael, who was previously the core developer of Tracee at Aqua. Bringing deep domain expertise in eBPF-based sensing for cloud-native infrastructure, Rafael complements our team's existing strengths in security observability, joining Lorenzo and Leo, who previously worked on foundational OSS tooling like Falco and kubectl-trace.

With three of the world's leading eBPF observability experts on our bench, we are confident in our ability to deliver a best-in-class solution for modern AppSec observability and serve as a powerhouse resource for our customers.

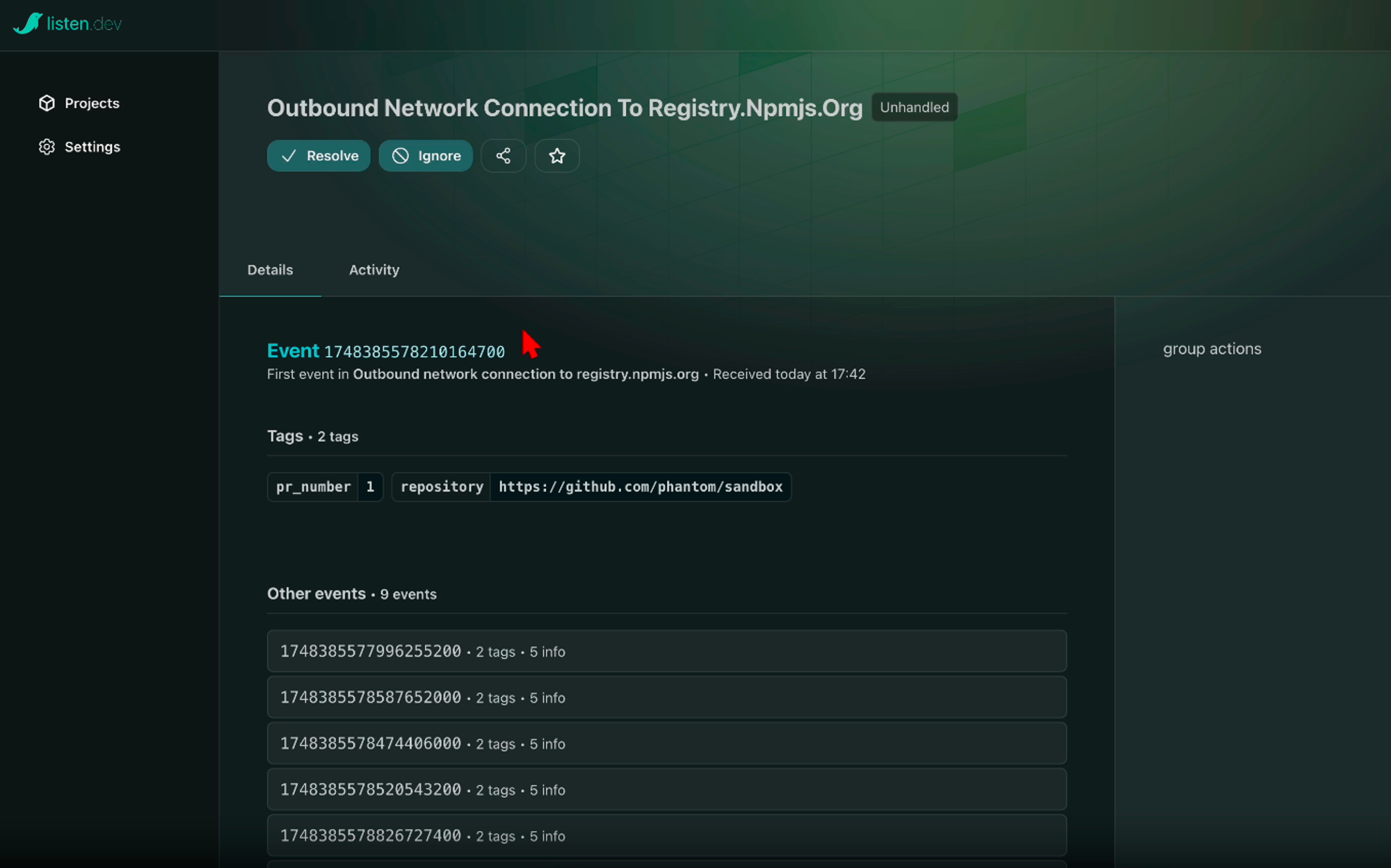

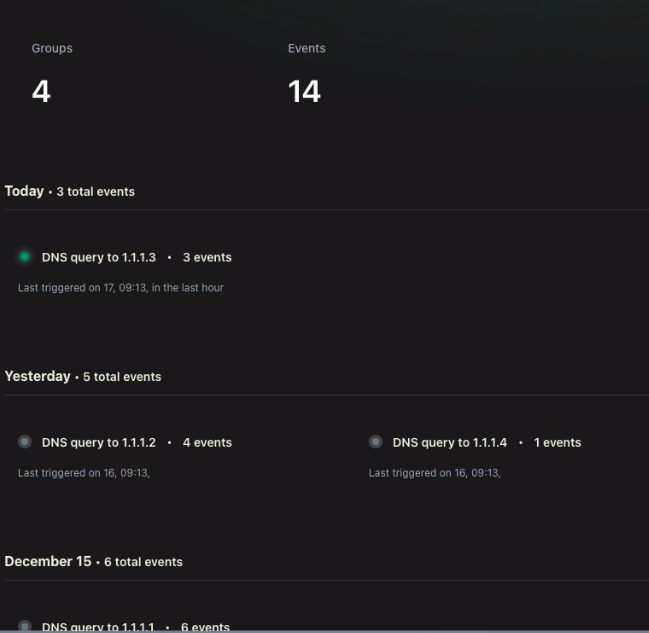

We've been developing an event-driven architecture to power use cases in security observability. Using our behavioural analysis backbone, this system collects security events throughout the SDLC from various collectors, groups them based on baseline intelligence and user guidance (such as whitelists), and surfaces alerts in case of anomalies.

Events are served through a highly configurable webhook API, allowing for action triggers and import into custom sinks (such as a SIEM).
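To make the sink import concrete, here is a minimal sketch of a consumer that takes a webhook body and appends it to a sink as one JSON line, a format many SIEMs can ingest. The payload fields (`event_id`, `package`, `kind`) and the function name are assumptions for illustration, not the actual listen.dev webhook schema.

```python
import io
import json
from typing import TextIO

# Hypothetical minimum set of fields; the real schema may differ.
REQUIRED_FIELDS = ("event_id", "package", "kind")


def forward_event(raw_body: bytes, sink: TextIO) -> bool:
    """Validate a webhook body and append it to the sink as a JSON line.

    Returns False (and writes nothing) if the body is not a JSON object
    or lacks one of the required fields.
    """
    try:
        event = json.loads(raw_body)
    except ValueError:  # covers malformed JSON and invalid UTF-8
        return False
    if not isinstance(event, dict):
        return False
    if any(name not in event for name in REQUIRED_FIELDS):
        return False
    sink.write(json.dumps(event, sort_keys=True) + "\n")
    return True


if __name__ == "__main__":
    demo_sink = io.StringIO()
    body = b'{"event_id": "1", "package": "puppeteer", "kind": "network"}'
    print("accepted:", forward_event(body, demo_sink))
    print(demo_sink.getvalue(), end="")
```

In a real deployment the sink would be a file, queue, or SIEM client rather than an in-memory buffer, and the receiver would also authenticate the sender.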

A sneak peek into our new interface:

Use case example

The npm package puppeteer interacts with Chromium in its regular state. At every build, our sensor captures this behavior and groups similar events to establish a baseline. In case we observe a change (such as a new transitive dependency introducing a suspicious network source), an alert is triggered through a webhook with relevant context around the event—including tags representing owner, execution trace diff, service of origin, and other details gathered from our collection. We intend this to be highly configurable, including the ability to import the JSON into your own SIEM.
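The baseline idea in this use case can be sketched in a few lines: learn the network destinations seen during known-good builds, then flag anything new that is not explicitly allowed. The class, field names, alert shape, and example hostnames below are all hypothetical. This is a simplified illustration, not our production grouping logic.

```python
from dataclasses import dataclass, field


@dataclass
class Baseline:
    """Network destinations considered normal for one package."""

    known: set[str] = field(default_factory=set)
    allowlist: set[str] = field(default_factory=set)

    def learn(self, destinations: set[str]) -> None:
        """Fold destinations from a known-good build into the baseline."""
        self.known |= destinations

    def drift(self, destinations: set[str]) -> set[str]:
        """Return destinations that are neither known nor allowlisted."""
        return destinations - self.known - self.allowlist


def build_alert(package: str, new_destinations: set[str], tags: dict[str, str]) -> dict:
    """Assemble a JSON-serializable alert for a webhook body."""
    return {
        "package": package,
        "kind": "new_network_destination",
        "destinations": sorted(new_destinations),
        "tags": tags,
    }


if __name__ == "__main__":
    baseline = Baseline(allowlist={"registry.example"})
    baseline.learn({"chromium-download.example"})

    # A later build contacts an extra host introduced by a new dependency.
    observed = {"chromium-download.example", "registry.example", "unknown-host.example"}
    new = baseline.drift(observed)
    if new:
        print(build_alert("puppeteer", new, {"owner": "web-team"}))
```

Keeping `learn` and `drift` separate means the first build does not alert on everything, and a suspicious build is never folded into the baseline by accident.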

We wrap up 2023 with significant improvements to product experience.

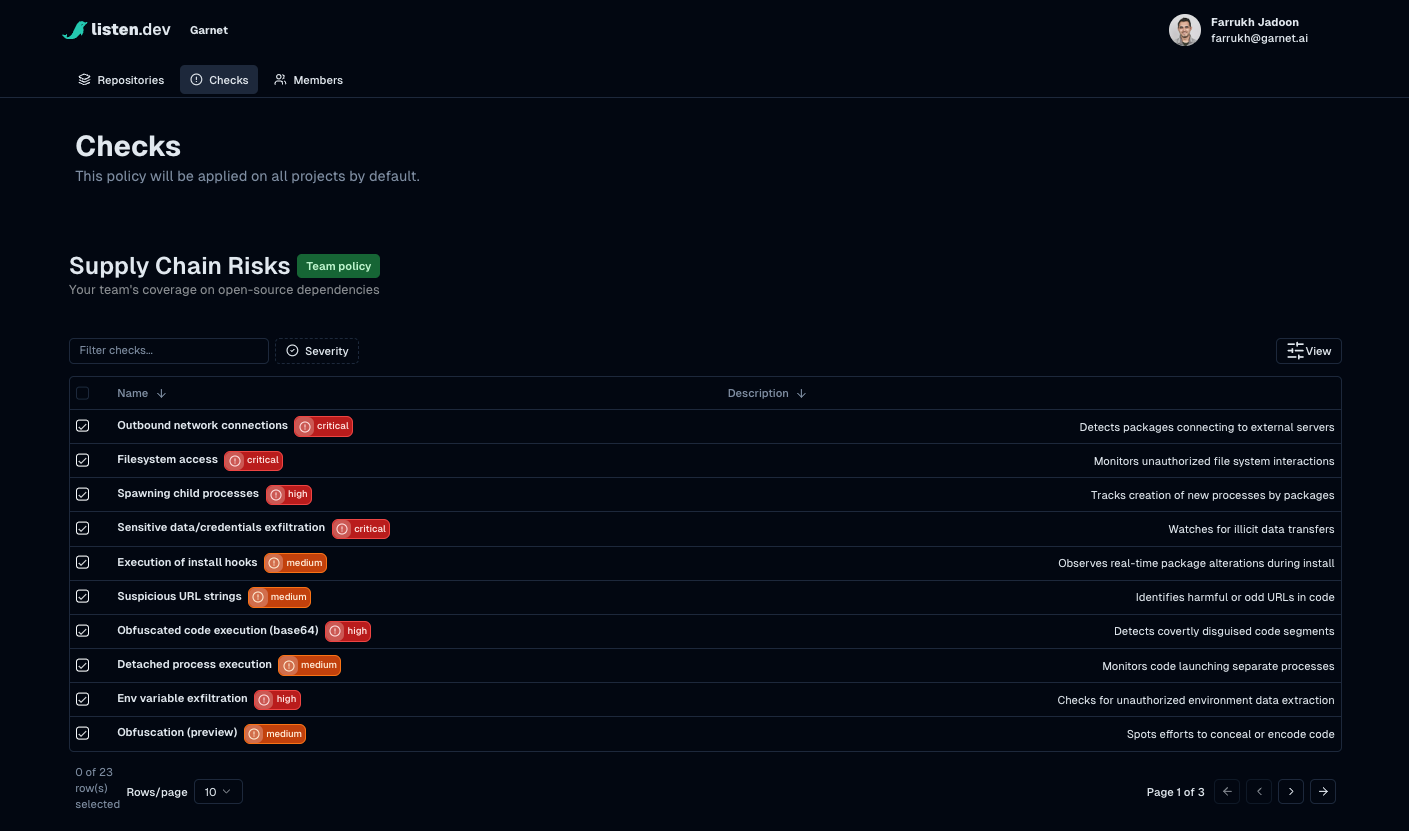

Detections to cover a range of modern supply chain risks

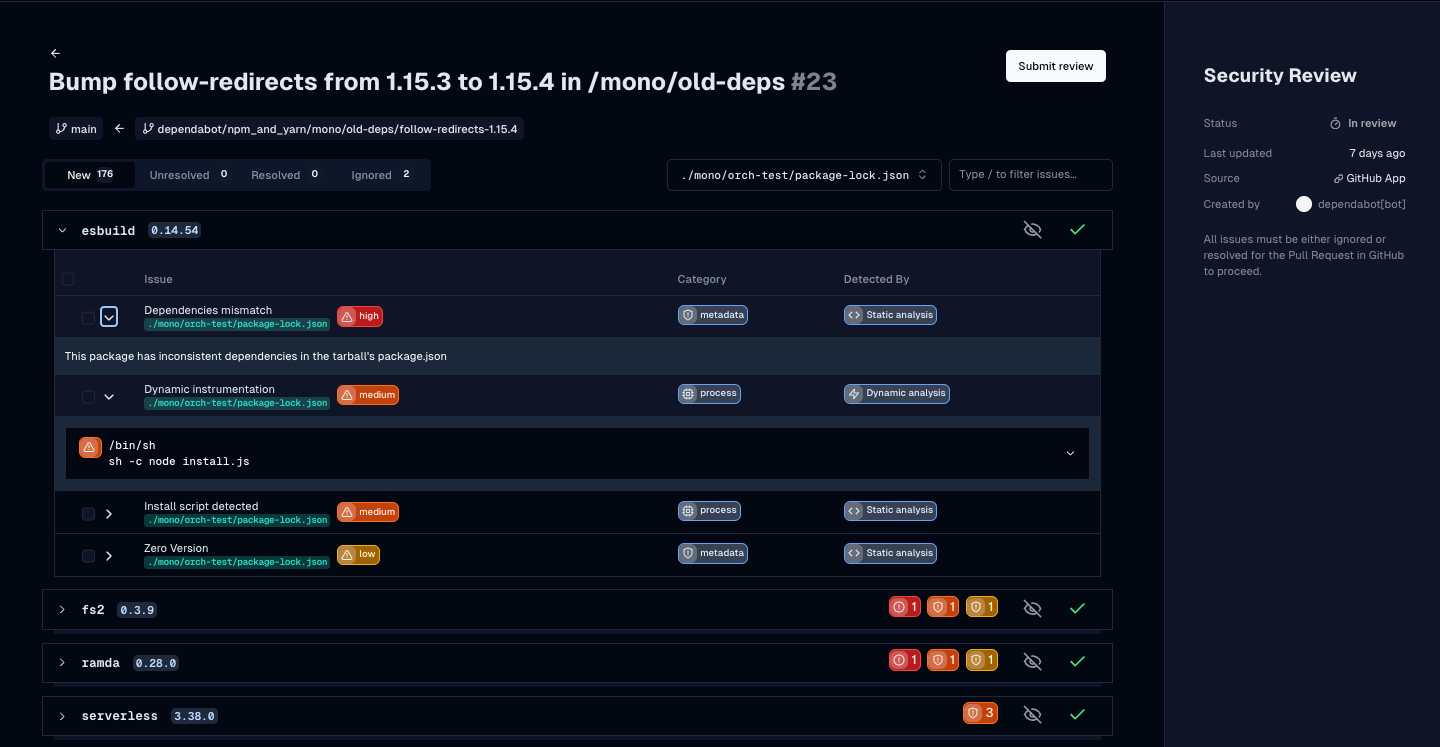

Checks provide detections across a range of supply chain risk vectors through static, metadata and dynamic analysis. We've rolled this out for npm in our public beta, and this provides visibility and intelligence into your open source dependencies and their posture.

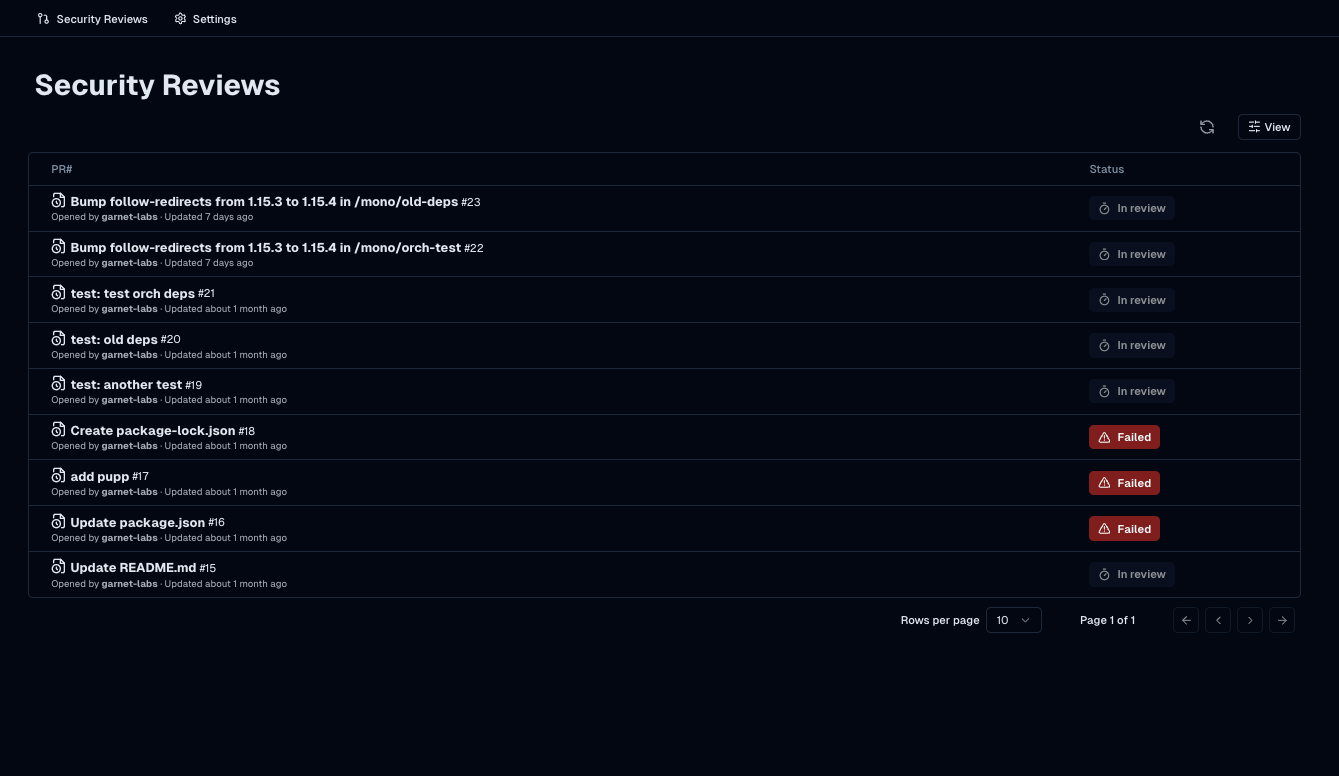

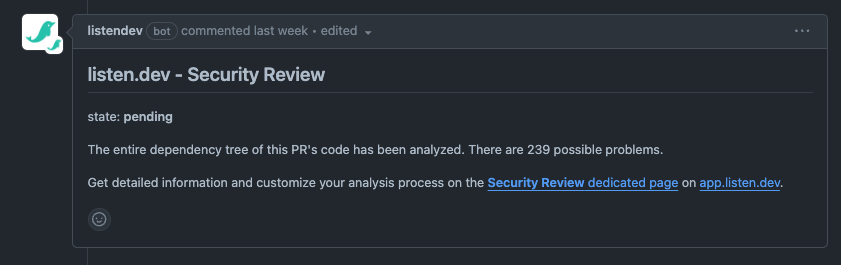

Security reviews for each PR

Work with your team and close the loop on security issues for every change in your dependency tree.

This is synced with GitHub, where context is summarized in a PR comment.

The issue review workflow

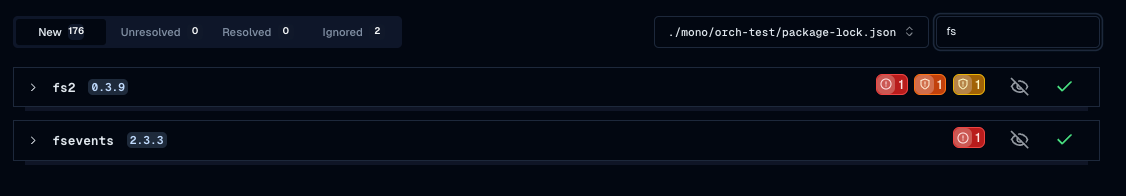

View and triage issues at the dependency-level, with in-line context to dig deeper.

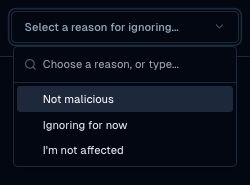

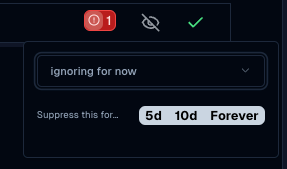

- Triage flows: snooze and resolve issues, record context.

- UX improvements: filtered views, monorepo scopes and tabs.

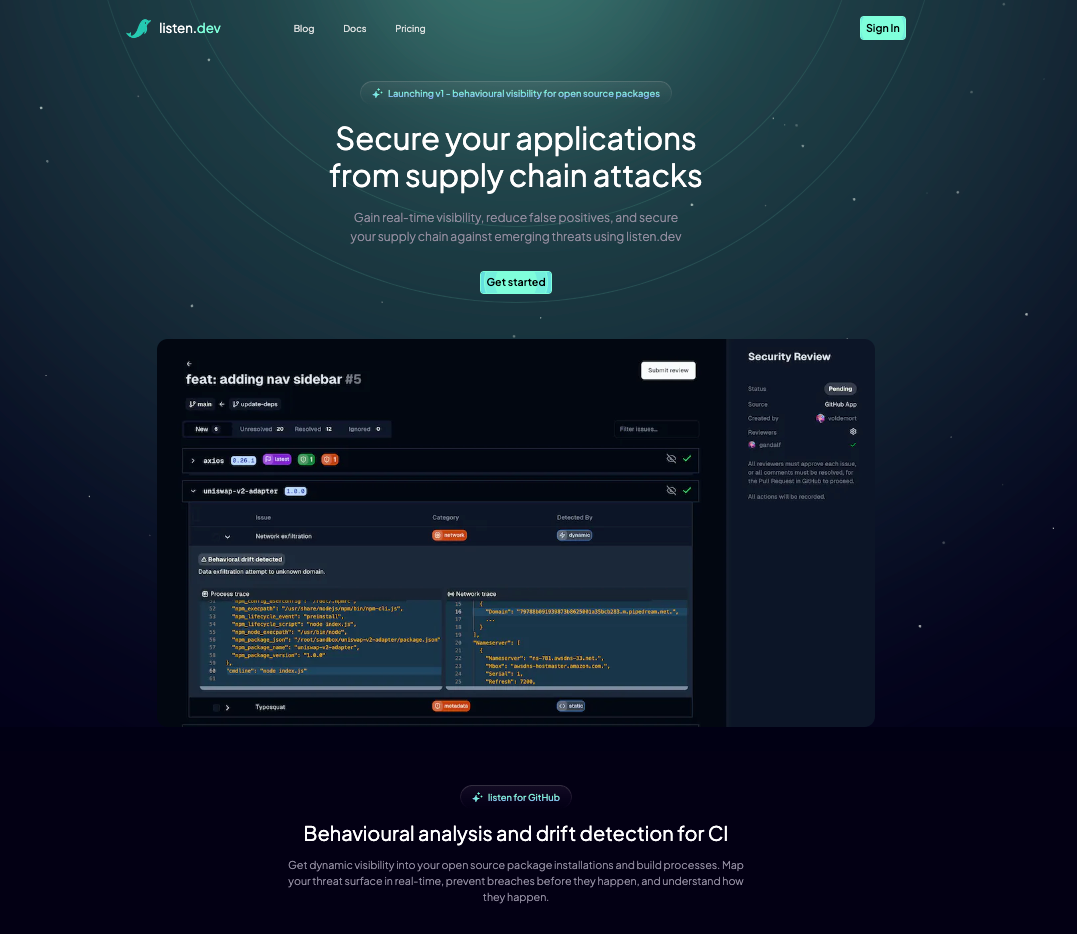

We've launched a new website for listen.dev, showcasing our fresh take on AppSec with a developer focus.

The listen.dev research team published an investigation on the recent high-profile attack on the Ledger crypto wallet–making waves as one of the most sophisticated breaches affecting the crypto and npm ecosystems.


As we kick off the new year, we wanted to share a recap of what we've achieved so far at listen.dev, how we're thinking about our future roadmap, and what's next:

Merging upstream intelligence with downstream context

Our focus so far has been on building the upstream view. This involved developing in-house machinery to collect a range of granular signals from upstream open source. This upstream context is powered by comprehensive collection (including static heuristics, metadata, and dynamic signals) along with analysis infrastructure that indexes, monitors, and sandboxes package installations for every new module published on the public npm and PyPI registries today. Over the past year, we've battle-tested and optimized it for performance and cost.

The next phase of our roadmap involves building the downstream view. This will focus on agents that collect context from your downstream environments, like CI pipelines, where builds and QA take place. Currently, we capture dependency lists from lockfiles through GitHub, and will be extending this coverage through a CI-based sensor in the coming months.
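For readers unfamiliar with lockfile-based collection, here is a minimal sketch of extracting a dependency list from an npm `package-lock.json`. It assumes the `packages` map used by lockfile versions 2 and 3, and the function name is hypothetical. It illustrates the general idea only and is not how our GitHub integration is implemented.

```python
import json


def dependencies_from_lockfile(lock_text: str) -> list[tuple[str, str]]:
    """Return sorted, de-duplicated (name, version) pairs from a lockfile.

    Assumes the "packages" map of package-lock.json (lockfileVersion 2 or 3),
    where keys are install paths such as "node_modules/a/node_modules/b".
    """
    lock = json.loads(lock_text)
    found: set[tuple[str, str]] = set()
    for path, meta in lock.get("packages", {}).items():
        # Skip the root project ("") and workspace folders.
        if "node_modules/" not in path:
            continue
        # The package name is whatever follows the last "node_modules/".
        name = path.rsplit("node_modules/", 1)[-1]
        version = meta.get("version")
        if version:
            found.add((name, version))
    return sorted(found)


if __name__ == "__main__":
    sample = json.dumps({
        "lockfileVersion": 3,
        "packages": {
            "": {"name": "app", "version": "1.0.0"},
            "node_modules/puppeteer": {"version": "21.0.0"},
            "node_modules/puppeteer/node_modules/ws": {"version": "8.13.0"},
        },
    })
    for name, version in dependencies_from_lockfile(sample):
        print(name, version)
```

A lockfile lists what was resolved, not what actually ran during the build, which is the gap a CI-based sensor is meant to close.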

Downstream Focus as a Key Differentiator

Traditional SCA tools primarily concentrate on upstream package analysis covering open source risk. However, this static, top-level view isn't sufficient to guard against modern attacks.

Our approach aims to provide a comprehensive AppSec solution for Dev/Sec/Ops offering:

- Holistic Visibility: real-time coverage of your entire application environment and threat surface, including its complete software supply chain, during install and build time.

- Actionable Alerts: intelligent insights like drift detection and anomalies from behavioural baselines help you focus remediation efforts.

- Reduced False Positives: by correlating upstream and downstream context and tailoring policy definitions to your risk profile, we ensure alerts are relevant and critical to your teams.

This not only provides the first line of defense for supply chain attacks—preventing them before impact—but also helps teams understand how they happen through detailed forensics to develop a proactive security posture.

Case study: Uncovering the Ledger Attack through Dynamic Behavioural Analysis

Our research team was down in the trenches with the recent Ledger attack. We published a blog post detailing our investigation and how we were able to detect the attack using listen.dev.